Gemini Omni: What We Know About Google's Leaked Video Model Ahead of I/O 2026

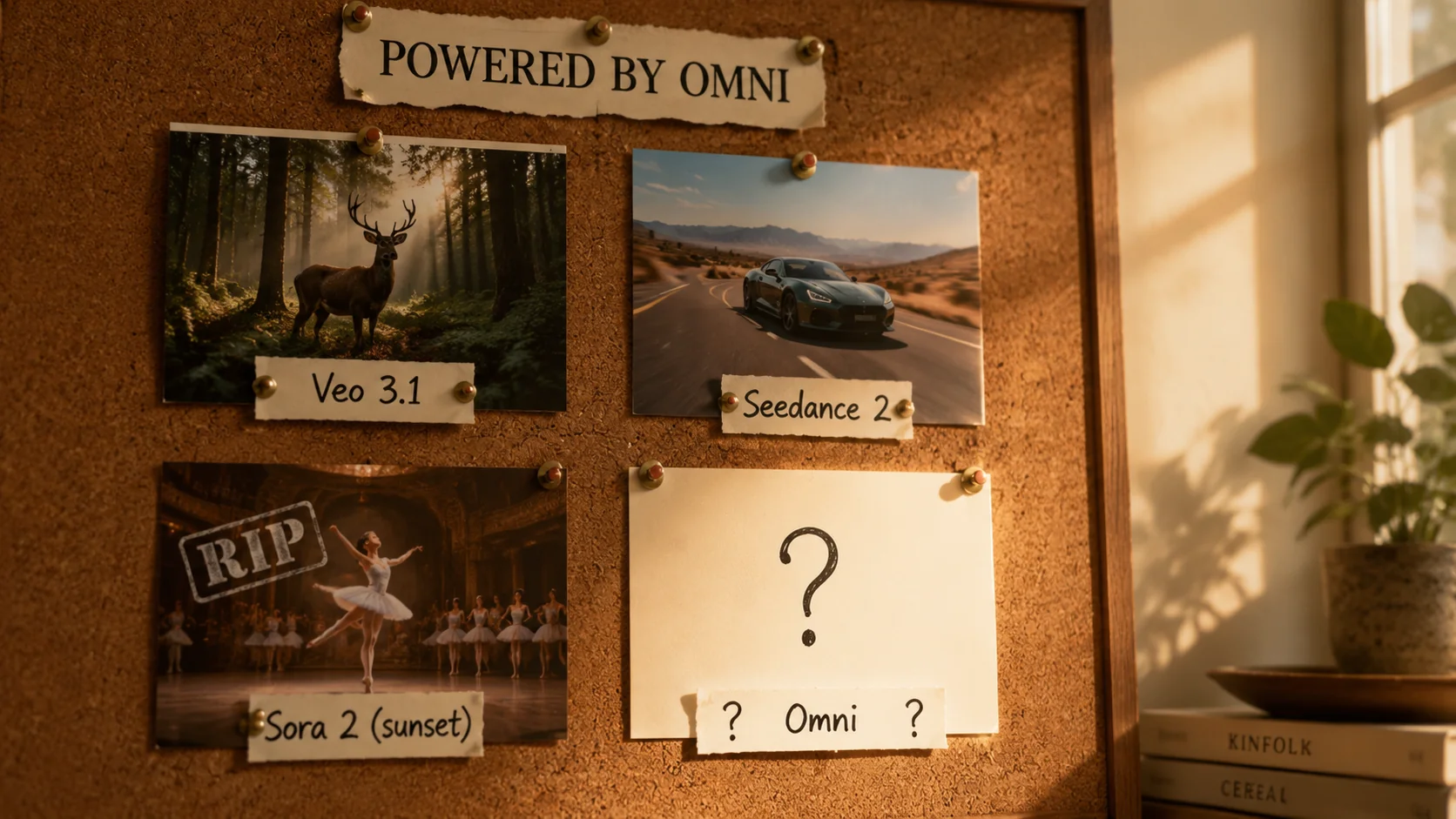

Google's Gemini Omni video model surfaced in a leaked UI string ahead of I/O 2026 (May 19–20). Here's what's confirmed, what's rumor, three plausible interpretations, and how Omni would compare to Veo 3.1, Seedance 2, Kling 3.0, and the now-sunsetting Sora 2.

Roughly two weeks before Google I/O 2026, a UI string surfaced inside Gemini's video generation tab that has the AI video community paying close attention: "Start with an idea or try a template. Powered by Omni."

That single line — first spotted by user Thomas16937378 on X and amplified by TestingCatalog on May 2, 2026 — is the strongest signal yet that Google is preparing to ship a new video generation model under the name Gemini Omni, alongside (or in place of) the current Veo 3.1-powered "Toucan" pipeline that Gemini uses today.

This post separates what's actually been leaked from what's rumor, walks through the three plausible interpretations of "Omni," compares it to the rest of the AI video field — Veo 3.1, Seedance 2, Kling 3.0 — and shows where Kubeez fits whether or not Omni ships at I/O on May 19–20.

#What's actually been confirmed (and what hasn't)

Let's be precise about evidence, because the search results for "Gemini Omni" are already filling up with speculation dressed as fact.

Confirmed by the leak:

- The literal placeholder string "Start with an idea or try a template. Powered by Omni." appears inside Gemini's video generation tab.

- The string sits next to "Toucan," the internal codename for Gemini's existing video tool — which is currently powered by Veo 3.1.

- The leak surfaced on May 2, 2026, roughly two weeks before Google I/O 2026 (May 19–20), the conference where Google has confirmed Gemini and AI updates are on the agenda.

Not yet confirmed by Google:

- Resolution, clip length, frame rate.

- Whether Omni generates audio natively (the way Veo 3.1 does).

- Whether Omni is text-to-video, image-to-video, or both.

- Pricing or per-second cost.

- Public availability — consumer Gemini app, Vertex AI, or both.

- Whether "Omni" is a new model at all, versus a new product surface over an existing one.

If a piece you read online quotes specs (8K, 60 FPS, 60-second clips, "powered by Gemini 3 Ultra," etc.), it's speculation. The leaked surface area is one UI string. Treat everything else as a guess until Sundar Pichai walks on stage on May 19 — including the Kubeez post you're reading right now.

#Three plausible interpretations of "Omni"

Coverage from TestingCatalog, WaveSpeedAI, and AIPlanetX converges on three possibilities. None are mutually exclusive.

#1. Omni is a new public name for the Veo-powered pipeline

Easiest interpretation: Google is simply rebranding the consumer-facing video surface. "Toucan" was always an internal codename. "Powered by Omni" could be Google polishing the consumer story before I/O, with Veo 3.x still doing the work underneath. This would be a marketing move, not a new model.

#2. Omni is a new Gemini-trained video model alongside Veo

More interesting: Omni is a separate model trained inside the Gemini family, analogous to how Nano Banana 2 (Gemini 3.1 Flash Image) and Nano Banana Pro (Gemini 3 Pro Image) are Google's image models built on the Gemini stack rather than the separate Imagen lineage. In this scenario, Veo stays the cinematic flagship for high-end creative work, while Omni serves the in-app, conversational, "describe a video and get one" use case at consumer speed and cost.

#3. Omni is a single multimodal model that handles image and video together

The name itself points here. "Omni" mirrors the naming pattern OpenAI used with GPT-4o (the "o" stands for omni) to signal a single model that natively handles multiple modalities. If this is the play, Omni would generate video, image, and audio from a unified Gemini-architecture model, with the long context window Gemini is already known for. In principle, that would let you upload a 50-page script and get back consistent characters and lighting across an entire generated sequence.

This is the version of Omni that would actually shake the market, because nobody else ships unified video, image, and audio generation in one model today. At Google, Veo, Imagen, and Lyria sit in separate pipelines. Sora was a dedicated video model, and Seedance and Kling still are: they may add audio, but none of them also generates still images. Even OpenAI's GPT-4o image generation lives in a separate execution path from its video work.

A true omni-model would change how creators think about prompting — less "generate a clip, generate art, edit them together," more "describe the scene end-to-end."

#How Omni would fit into the AI video landscape

To understand why this leak matters, you need the current map of AI video as of May 2026.

Veo 3.1 (Google) is the current cinematic benchmark. Strong physics, native audio with dialogue, scene extension, up to 3 reference images for character consistency, 4–8 second clips at 720p or 1080p with 4K upscaling. It powers Gemini's "Toucan" tab today and is available on Kubeez — see our Veo 3.1 deep dive.

Seedance 2 (ByteDance) currently tops several public video-gen benchmarks for prompt adherence and motion quality at a fraction of flagship pricing. Audio is on by default. Available on Kubeez in two tiers — Seedance 2 and Seedance 2 Fast.

Kling 3.0 (Kuaishou) is the premium-motion option for high-end creative work. It handles motion better than most competitors and adds longer-form support on top of the native audio that arrived with Kling 2.6. See Kling 3.0 vs 2.6.

Sora 2 (OpenAI) is, as of March 2026, a sunset story rather than a competitor. The consumer Sora app is shutting down and developer APIs are reportedly winding down too. The slot Sora 2 occupied — "viral consumer video gen with social distribution" — is now wide open. Omni's leaked positioning inside Gemini (not as a separate app) reads as a deliberate move into that vacated space.

If interpretation #3 is correct and Omni really is a unified omni-model, Google's competitive pitch becomes: "One model, one prompt, one app, one bill — for everything you'd otherwise stitch together from Veo, Imagen, ElevenLabs, and a video editor." That's a bigger threat to fragmented stacks than any single-modality competitor.

#When and where Omni will likely launch

The strongest signal points to Google I/O 2026 on May 19–20. Google has confirmed Gemini and AI updates are on the keynote agenda, the string appears to have gone live before Google intended, and "Powered by Omni" is the kind of placeholder that slips out two weeks before a launch by accident, not two months.

Most likely first surfaces:

- The Gemini consumer app (web and mobile), as a tab inside the existing video generation surface.

- Vertex AI for developers, possibly with the same kind of "Veo + Omni" split that Google already uses for "Imagen + Nano Banana" on images.

- A Gemini API endpoint, billed per-second like Veo.

Less likely but possible:

- A new standalone "Gemini Studio for Video" surface — Google has shown a willingness to spin up consumer-creative apps (Whisk, NotebookLM) when they want a focused product story.

We'll update this post the day Google announces, with verified specs.

#What Kubeez creators should actually do this week

Three honest pieces of advice:

1. Don't pause your roadmap waiting for Omni.

If you're shipping content this week, ship it on what works today: Veo 3.1 for cinematic, Seedance 2 for fast iteration with audio, Kling 3.0 for premium motion. All three are on Kubeez video generation right now and produce the same caliber of output regardless of what gets announced May 19.

2. Plan to A/B Omni against your current model the day it ships.

The right way to evaluate any new video model is the same brief on the same day on a stack you control. Run your standard test brief — a 6-second product demo, a 4-second cinematic shot with dialogue, a vertical 9:16 social clip — through Omni and through your current daily driver. Let the output decide, not the launch keynote.
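One way to keep that comparison honest is to fix the briefs up front and score the clips blind. The sketch below is a minimal harness under stated assumptions: `generate` is a hypothetical stand-in for whatever client you use to call a model (it is not a Kubeez or Google API), the model names are plain labels, and the 1 to 5 scores come from a human reviewer who sees only the blind IDs.

```python
import random
from dataclasses import dataclass
from statistics import mean
from typing import Callable


@dataclass(frozen=True)
class Brief:
    name: str
    prompt: str
    seconds: int
    aspect: str


# The three briefs named above. Prompts are placeholders to fill in.
# Aspect ratios for the first two briefs and the length of the third
# are assumptions; the post does not specify them.
BRIEFS = [
    Brief("product_demo", "<product demo prompt>", 6, "16:9"),
    Brief("cinematic_dialogue", "<cinematic shot prompt>", 4, "16:9"),
    Brief("social_vertical", "<social clip prompt>", 6, "9:16"),
]


def run_ab(
    models: list[str],
    briefs: list[Brief],
    generate: Callable[[str, Brief], str],  # hypothetical: returns a clip path or URL
    seed: int = 0,
) -> list[dict]:
    """Run every brief through every model and return shuffled, blinded trials."""
    trials = [
        {"brief": b.name, "model": m, "output": generate(m, b)}
        for b in briefs
        for m in models
    ]
    # Shuffle so the blind ID order carries no hint about which model made a clip.
    random.Random(seed).shuffle(trials)
    for i, trial in enumerate(trials):
        trial["blind_id"] = f"clip-{i:02d}"
    return trials


def summarize(trials: list[dict], scores: dict[str, int]) -> dict[str, float]:
    """Average the reviewer scores (keyed by blind_id) for each model."""
    by_model: dict[str, list[int]] = {}
    for trial in trials:
        by_model.setdefault(trial["model"], []).append(scores[trial["blind_id"]])
    return {model: mean(values) for model, values in by_model.items()}
```

In practice you would hand reviewers only the `blind_id` and `output` fields, collect their scores, and call `summarize` afterwards. Keeping the model label out of the review step is the design choice that matters here, since it stops launch-day hype from leaking into the scores.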

3. Stay on a multi-model platform.

This is the lesson the Sora 2 shutdown drove home for a lot of teams: betting an entire content workflow on one vendor's roadmap is fragile. The teams that absorbed the Sora 2 sunset cleanly were the ones already running on platforms with multiple video models. They migrated by switching a dropdown, not by rebuilding their pipeline.

Kubeez runs Veo 3.1, Seedance 2, Seedance 2 Fast, Kling 3.0, Kling 2.6, plus image models (Nano Banana 2, Nano Banana Pro, gpt-image-2, Flux, Z-Image), audio (music, dialogue, voice cloning), and captions in one workspace with one credit pool. If Gemini Omni ships and we're impressed, it joins the catalog. If not, you've lost nothing.

#What we'll be watching for at I/O on May 19

When Google takes the stage on May 19, here's the short list of questions that actually matter for creators and developers:

- Is Omni a new model or a new wrapper? Specifically: does it ship with a new model card and a new benchmark suite, or is it just a tab rename?

- Is it natively multimodal? Does the same prompt produce video + audio + reference image consistency, or does it still call out to separate Imagen / Lyria pipelines under the hood?

- What's the clip length and resolution ceiling? Veo 3.1 generates 4–8 second clips and tops out at 1080p (with 4K upscale). Sora was demoing 60 seconds before its sunset. The Omni cap will tell us a lot about who Google is targeting.

- Is audio in the same pass as video? Veo 3.1's strongest feature is single-pass audio + video with lip sync. If Omni keeps that and adds music or dialogue control, it's an upgrade. If it splits them, it's a step back.

- Is there a Vertex AI endpoint at launch, or consumer-only? Determines how fast it can land on platforms like Kubeez.

- What does it cost per second? Veo 3.1 is premium-priced. If Omni undercuts that, it puts pressure on the whole market.

We'll update this post the moment those questions get answered.
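The cost question reduces to simple arithmetic once per-second prices are published. As a rough sketch, the helpers below price a set of clips at a flat per-second rate and compare two rates. The function names are ours, flat per-second billing is an assumption, and the rates in the usage lines are placeholders, not real Veo 3.1 or Omni prices.

```python
def brief_cost(clip_seconds: list[int], price_per_second: float, takes: int = 1) -> float:
    """Total cost of generating each clip `takes` times at a flat per-second rate."""
    if price_per_second < 0:
        raise ValueError("price_per_second must be non-negative")
    if takes < 1:
        raise ValueError("takes must be at least 1")
    return sum(clip_seconds) * price_per_second * takes


def relative_saving(current_rate: float, new_rate: float) -> float:
    """Fraction saved by moving to new_rate (negative means it costs more)."""
    if current_rate <= 0:
        raise ValueError("current_rate must be positive")
    return (current_rate - new_rate) / current_rate


# Placeholder rates only: swap in published prices once they exist.
clips = [6, 4, 6]  # seconds per clip in a three-clip test brief
print(brief_cost(clips, price_per_second=0.50, takes=3))
print(relative_saving(current_rate=0.50, new_rate=0.25))
```

Multiplying by `takes` matters because few briefs land on the first generation, so a cheaper model that needs more retries can still cost more per usable clip.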

#FAQ

Is Gemini Omni officially announced? No. As of May 11, 2026, the only public evidence is a leaked UI string ("Powered by Omni") inside Gemini's video generation tab, first reported May 2, 2026. Google has not confirmed the model, its capabilities, pricing, or launch date. The most likely announcement window is Google I/O 2026 on May 19–20.

Is Gemini Omni available on Kubeez? No — because it isn't publicly available anywhere yet. If Google ships Omni at I/O 2026 with a Vertex AI endpoint, we'll evaluate it for the Kubeez video catalog the same way we evaluated Veo 3.1, Seedance 2, and Kling 3.0. Subscribe to the Kubeez blog or check the AI models guide for updates.

How is Omni different from Veo 3.1? We don't know yet. The leading theories (none confirmed) are: (1) Omni is the new public name for the same Veo-powered pipeline; (2) Omni is a separate Gemini-trained video model that runs alongside Veo; or (3) Omni is a unified multimodal model that handles image, video, and audio in a single generation pass — analogous to how GPT-4o handles text, image, and audio together.

Should I switch from Veo 3.1 or Seedance 2 to wait for Omni? No. Veo 3.1, Seedance 2, and Kling 3.0 are shipping today and producing professional-grade output. Don't pause a content roadmap on a leaked UI string. When Omni launches, A/B it against your current stack on the same brief — let the output decide, not the keynote.

Will Gemini Omni replace Sora 2? Sora 2's consumer app is already shutting down (announced March 2026 — see our coverage). The "viral consumer AI video" slot Sora 2 occupied is open. Omni's leaked surface is inside the Gemini consumer app, which is consistent with Google moving into that vacated space — but whether it does so successfully depends on the model itself, which we'll know on May 19.

#Bottom line

Gemini Omni is real enough to plan around, but not yet real enough to bet on. The leak is credible — a UI string surfaced on a live Gemini surface, two weeks before a known Google keynote — but the specs are still vapor. The smart play this week is to keep shipping on Veo 3.1, Seedance 2, and Kling 3.0, and be ready to test Omni against them the day it launches.

When that day comes, Kubeez video generation will have whatever wins on its merits — alongside the rest of the model catalog you already use.

For the broader landscape of which models to use for which job, see the AI models guide and the text-to-video workflow primer. For the model that'll be most directly compared to Omni at launch, read the Veo 3.1 deep dive.
