How AI Generation Works: From GANs to Autoregression

A deep dive into the technology behind AI: how GANs, Autoregression, and Diffusion models generate images, video, and sound.

Have you ever wondered how an AI can take a simple text prompt and turn it into a breathtaking landscape, a cinematic video, or a chart-topping melody? It's not magic—it's math, architecture, and a whole lot of data.

Welcome to our deep dive into the technology of AI generation. Today, we're going to break down the "Big Three" architectures and how they apply to the media you create every day.

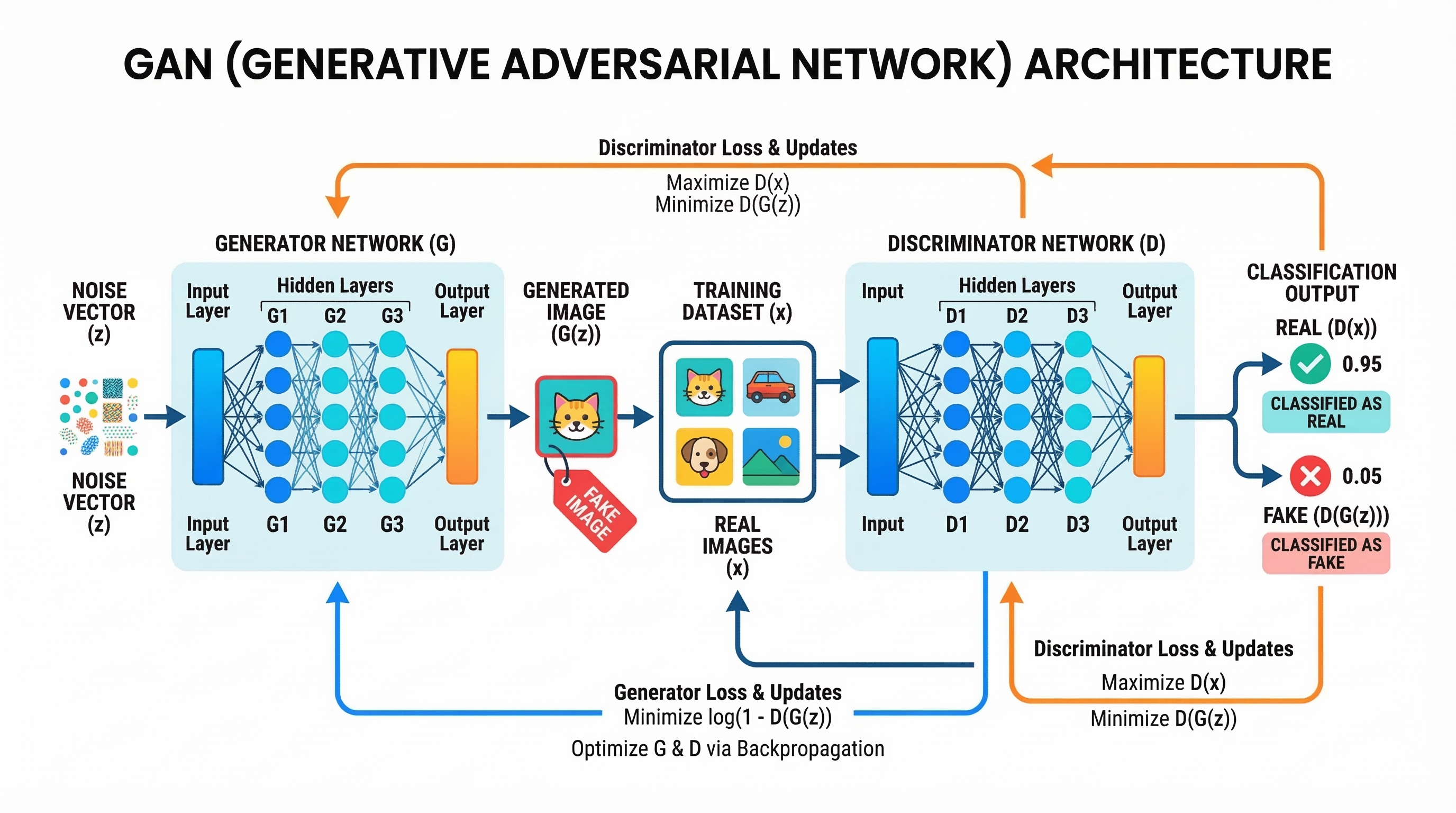

#1. The Duel of the Networks: GANs (Generative Adversarial Networks)

GANs were introduced in 2014 and dominated image generation for several years afterward.

Think of a GAN as a high-stakes competition between two AI networks:

- The Generator: Its job is to create fake images that look real.

- The Discriminator: Its job is to distinguish between real images (from a dataset) and fake images (from the Generator).

As they compete, both get better. The Generator learns how to "fool" the Discriminator, while the Discriminator learns to spot the tiniest flaws. In the ideal case, training ends when the Discriminator can do no better than guess. In practice this balance is hard to reach, and GAN training is known for being unstable.
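The tug-of-war above can be sketched with the two loss functions that drive it. This is a minimal illustration, not a full GAN: there are no networks here, and the Discriminator outputs (`sharp_*`, `fooled_*`) are made-up probabilities chosen only to show how the losses move. The Generator loss uses the common "non-saturating" form, which is an assumption about the setup rather than something specific to any one model.

```python
import math

def bce(prediction, target):
    """Binary cross-entropy for one prediction in (0, 1) against a 0/1 target."""
    eps = 1e-12  # keeps log() away from zero
    p = min(max(prediction, eps), 1.0 - eps)
    return -(target * math.log(p) + (1 - target) * math.log(1.0 - p))

def discriminator_loss(d_real, d_fake):
    """The Discriminator wants D(real) close to 1 and D(fake) close to 0."""
    real_term = sum(bce(p, 1) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """The Generator wants D(fake) close to 1 (non-saturating form)."""
    return sum(bce(p, 1) for p in d_fake) / len(d_fake)

# Hypothetical Discriminator outputs: probability that each image is real.
sharp_real, sharp_fake = [0.95, 0.9], [0.05, 0.1]    # Discriminator is winning
fooled_real, fooled_fake = [0.5, 0.5], [0.5, 0.5]    # Discriminator is guessing

print("sharp :", discriminator_loss(sharp_real, sharp_fake), generator_loss(sharp_fake))
print("fooled:", discriminator_loss(fooled_real, fooled_fake), generator_loss(fooled_fake))
```

When the Discriminator is winning, its loss is low and the Generator's is high. When it can only guess, its loss settles at 2·ln 2 (about 1.386). In a real GAN, each network takes gradient steps on its own loss in alternation.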

Best for: Real-time generation, image-to-image translation, and specific tasks like upscaling.

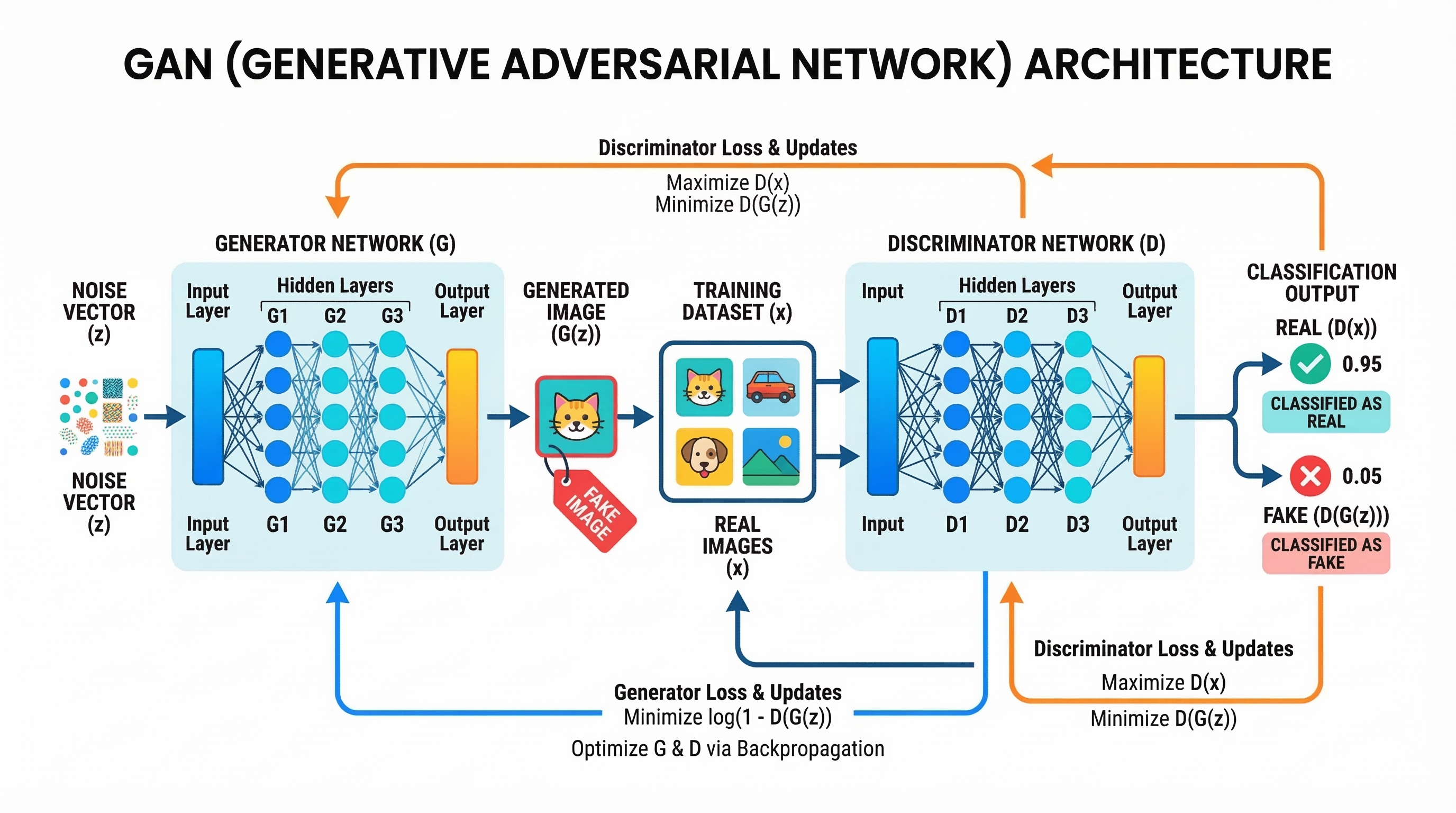

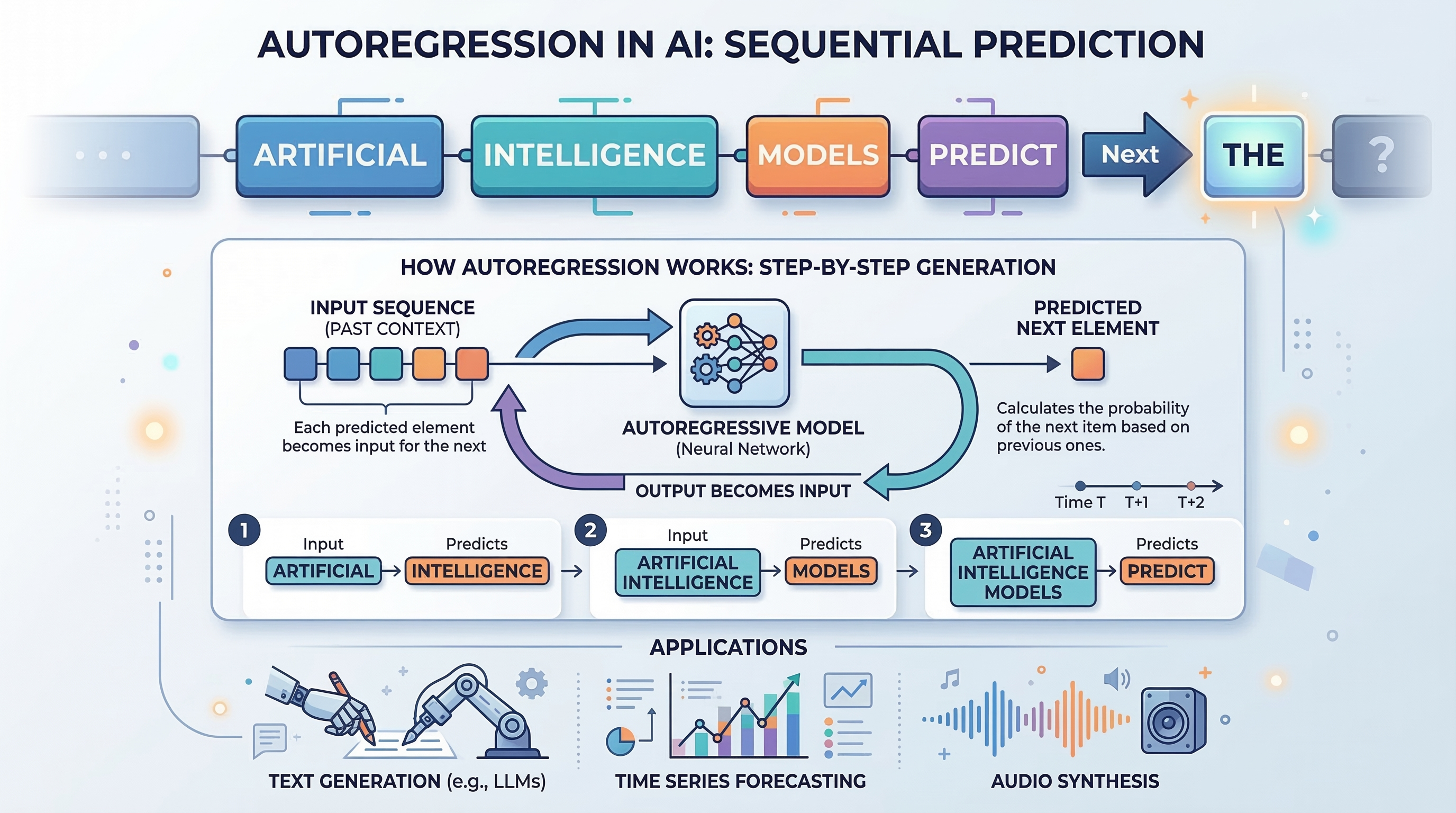

#2. One Step at a Time: Autoregression

Autoregression is the logic behind Large Language Models (LLMs) like GPT, but it's also used in media generation.

The core idea is simple: predict the next piece of data based on all previous pieces.

If you're generating a sentence, the AI predicts the next word (more precisely, the next token). If you're generating an image with an autoregressive model, it predicts the next pixel (as PixelCNN does) or the next image token (as the original DALL-E did). Either way, it builds the final result piece by piece, token by token.
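Here is a toy sketch of that loop. To stay tiny, it makes a big simplification: it looks only at the single previous token (a "bigram" model) and always picks the most frequent follower, whereas a real LLM conditions on the whole preceding context with a neural network. The corpus and function names are illustrative.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return dict(counts)

def generate(counts, start, length):
    """Build a sequence one token at a time, feeding each output back in."""
    out = [start]
    while len(out) < length:
        followers = counts.get(out[-1])
        if not followers:          # nothing ever followed this token: stop
            break
        # Greedy decoding: take the most frequent next token.
        out.append(max(followers, key=followers.get))
    return out

corpus = "rain falls rain falls rain stops".split()
model = train_bigram(corpus)
print(generate(model, "rain", 4))  # ['rain', 'falls', 'rain', 'falls']
```

Each new token is appended to the output and becomes part of the input for the next prediction. That feedback loop is what makes the process autoregressive.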

Best for: Text generation (LLMs), sound generation (predicting audio tokens), and long-sequence consistency.

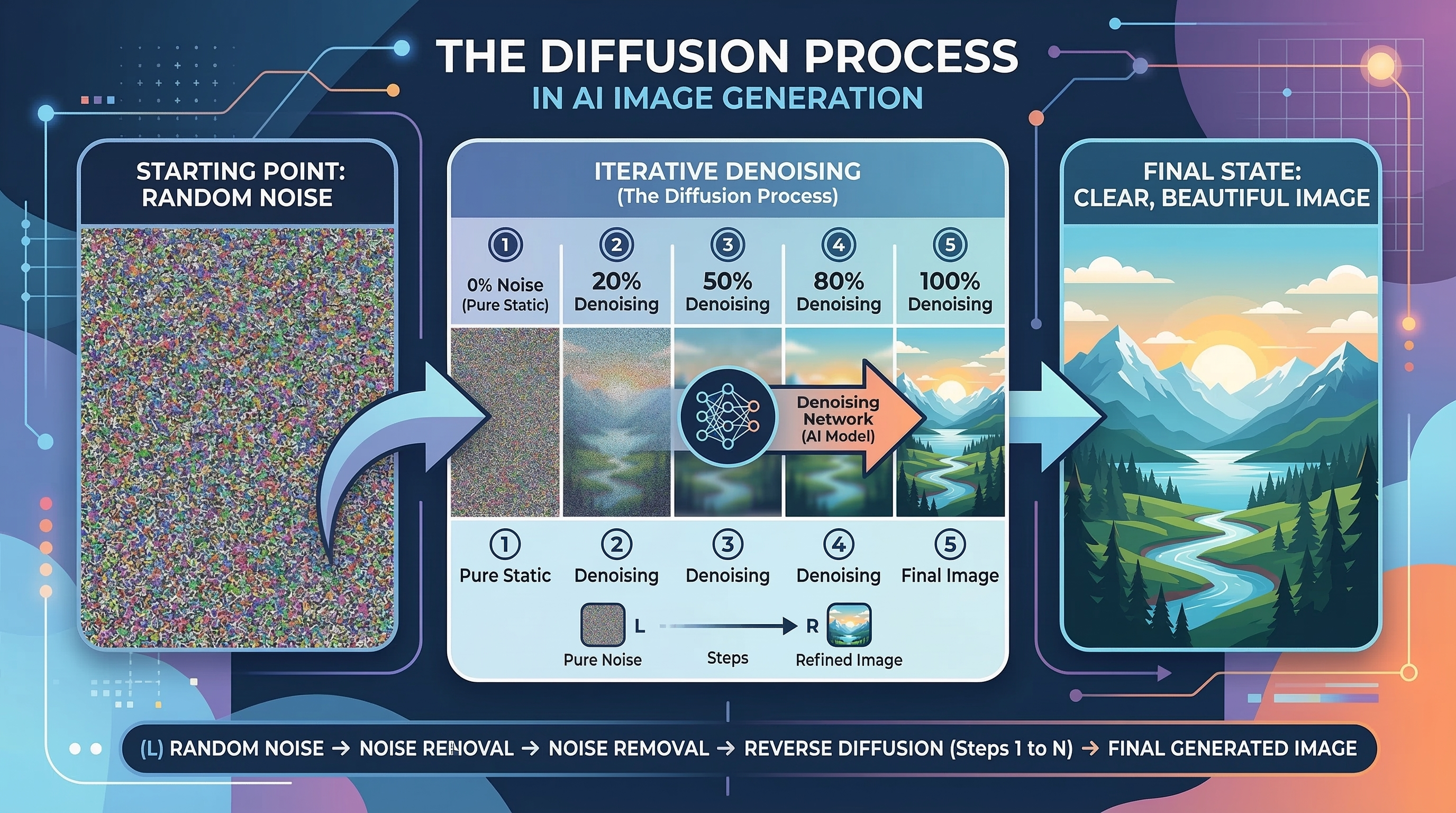

#3. From Noise to Masterpiece: Diffusion Models

This is the family of models behind the current explosion of AI art and video, in tools like Midjourney, Flux, and Kling.

Diffusion models work by starting with pure "noise"—think of the static on an old TV screen—and gradually "denoising" it until a clear image emerges.

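During training, the AI takes real images, adds a known amount of noise, and learns to predict that noise so the process can be reversed. When you give it a prompt, it starts with a random noise field and removes a little noise at each step, guided by the prompt, until the result matches what you described. Many current models do this in a compressed "latent" space rather than directly on pixels.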
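The noising and denoising math can be sketched on a three-number "image". This follows the standard DDPM-style formulation with a linear noise schedule. The schedule values and function names are assumptions for illustration. In place of a trained network, the example cheats and hands the true noise to `estimate_x0`, just to show that a perfect noise prediction would recover the original exactly.

```python
import math
import random

def alpha_bar(t, num_steps, beta_start=1e-4, beta_end=0.02):
    """Fraction of the original signal left after t noising steps (linear schedule)."""
    prod = 1.0
    for s in range(t):
        beta = beta_start + (beta_end - beta_start) * s / (num_steps - 1)
        prod *= 1.0 - beta
    return prod

def add_noise(x0, t, num_steps, rng):
    """Forward process: jump straight from clean data x0 to its noisy version at step t."""
    ab = alpha_bar(t, num_steps)
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(ab) * x + math.sqrt(1.0 - ab) * e for x, e in zip(x0, eps)]
    return xt, eps

def estimate_x0(xt, predicted_eps, t, num_steps):
    """Reverse direction: given a noise prediction, solve for the clean data."""
    ab = alpha_bar(t, num_steps)
    return [(x - math.sqrt(1.0 - ab) * e) / math.sqrt(ab)
            for x, e in zip(xt, predicted_eps)]

rng = random.Random(0)
x0 = [0.5, -1.0, 2.0]                       # stand-in for image pixels
xt, true_eps = add_noise(x0, 500, 1000, rng)
# A trained model would predict the noise; here we use the true noise as an oracle.
recovered = estimate_x0(xt, true_eps, 500, 1000)
print(xt, recovered)
```

A real sampler does not jump back in one step. It repeats a small denoising update many times, and its noise predictions are only approximate, which is why more steps usually give cleaner results.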

Best for: High-fidelity images, photorealism, and complex video generation.

#4. How Video Generation Works

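Video generation usually extends the ideas above along the time axis. Some models run Diffusion over a whole block of frames at once (height, width, and time, sometimes called "3D Diffusion"), while others generate frames or chunks one after another ("Temporal Autoregression").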

Models like Kling 3.0 or Veo 3.1 don't just generate frames one by one—they have to ensure temporal consistency. This means that if a ball is moving in Frame 1, it must be in a logical position in Frame 2.

The AI uses "attention mechanisms" to look at multiple frames at once, ensuring that physics, lighting, and movement remain consistent throughout the clip.
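A bare-bones version of attention across frames might look like the sketch below. Each frame is reduced to a tiny, made-up feature vector, and the learned query/key/value projections that real models use are left out. Production video models attend over many space-time patches per frame, not one vector per frame.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)                      # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return output, weights

# Hypothetical per-frame features: frames 0 and 1 look alike, frame 2 differs.
frames = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
output, weights = attend(frames[1], frames, frames)
print(weights)   # frames 0 and 1 receive more weight than frame 2
print(output)    # frame 1's new features: a weighted blend of all frames
```

Because every frame's updated features are a blend of the other frames, information such as where the ball was a moment ago can flow across the clip.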

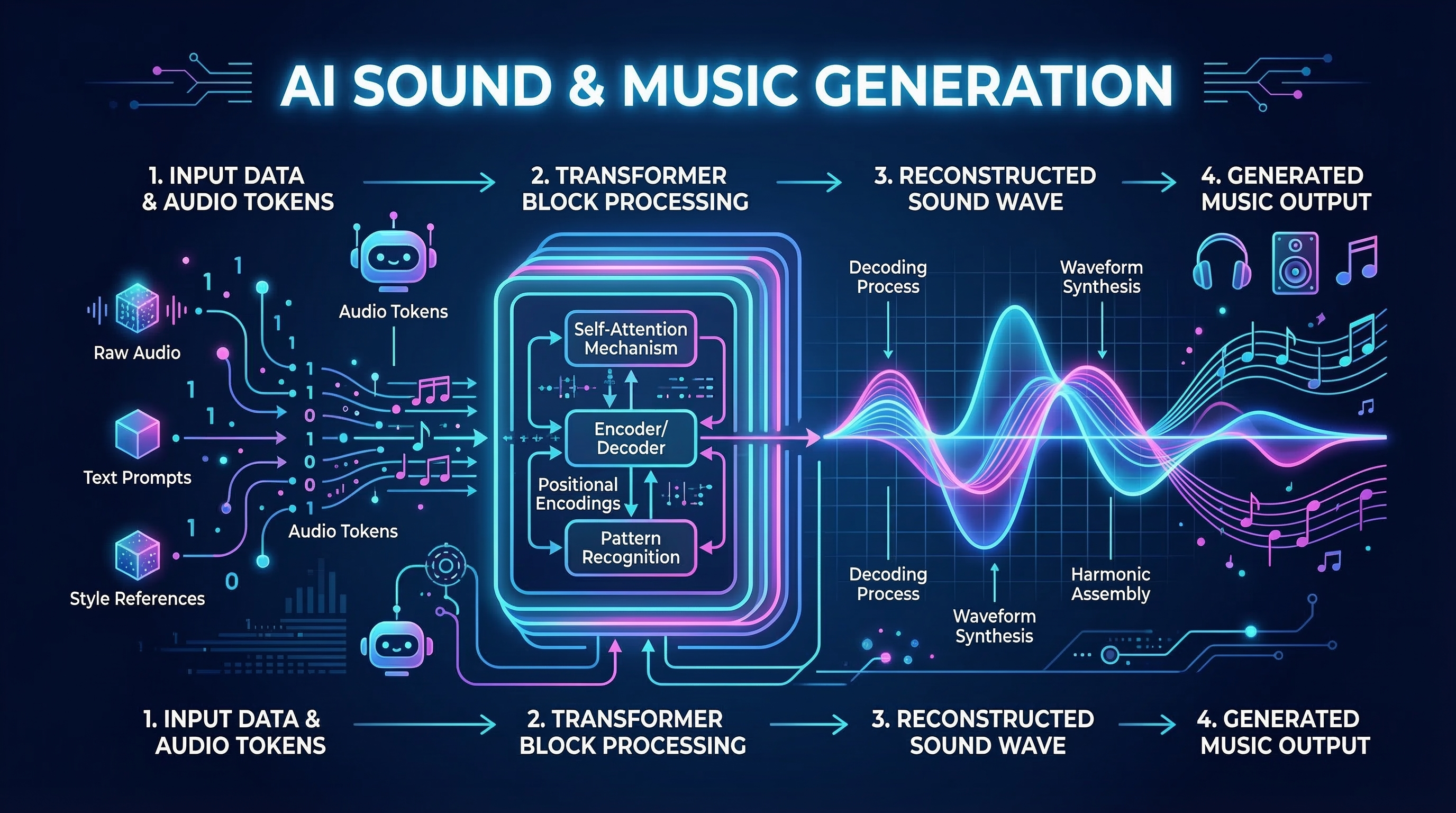

#5. The Rhythm of Data: Sound & Music Generation

Sound generation often combines Autoregression with Diffusion.

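The exact architectures behind tools like Suno (full-song generation) or ElevenLabs (voice) are not fully public, but a common design in audio models is to convert audio into "tokens" (similar to words in text), predict the next audio token (Autoregression), and then use a decoder or "Vocoder" to turn those tokens back into high-quality sound waves. That decoder can be a neural codec decoder, a GAN-based vocoder, or a Diffusion model.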
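To make "audio tokens" concrete, here is a sketch using 8-bit mu-law quantization, a classic scheme that WaveNet used to turn each audio sample into one of 256 integer tokens. It is a simple stand-in: modern systems typically use learned neural codecs instead, and the sample rate and test tone below are arbitrary choices.

```python
import math

MU = 255  # 8-bit mu-law: 256 possible tokens

def encode(sample):
    """Map an audio sample in [-1, 1] to an integer token in [0, 255]."""
    sample = max(-1.0, min(1.0, sample))
    compressed = math.copysign(math.log1p(MU * abs(sample)) / math.log1p(MU), sample)
    return int(round((compressed + 1.0) / 2.0 * MU))

def decode(token):
    """Map a token back to an approximate audio sample in [-1, 1]."""
    compressed = 2.0 * token / MU - 1.0
    return math.copysign(math.expm1(abs(compressed) * math.log1p(MU)) / MU, compressed)

# 10 ms of a 440 Hz tone at an assumed 16 kHz sample rate.
wave = [math.sin(2 * math.pi * 440 * n / 16000) for n in range(160)]
tokens = [encode(s) for s in wave]           # what an autoregressive model would predict
rebuilt = [decode(t) for t in tokens]        # what the decoder turns back into sound
print(tokens[:8])
print(max(abs(a - b) for a, b in zip(wave, rebuilt)))  # worst-case round-trip error
```

Once audio is a sequence of integers like this, next-token prediction works the same way it does for text.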

#Conclusion: The Future of Creativity

Understanding the "how" behind the "wow" gives you a better edge as a creator. Whether it's the competitive nature of GANs, the step-by-step logic of Autoregression, or the noise-sculpting magic of Diffusion, each architecture brings a unique flavor to the creative process.

At Kubeez, we give you access to all these cutting-edge models in one platform. Ready to start your next generation? Get started today.